Low Hanging Compute

...and the Era of Technological Determinism

“If Intelligence is log of compute, whoever can do lots of compute is a big winner.”

—Satya Nadella

The assumption seems to be that more compute means more intelligence.
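Taken literally, the quote cuts against the brute-force reading. A minimal sketch, assuming intelligence really is the base-10 log of compute (the function name, the `scale` constant and the choice of base are my own illustrative assumptions, not anything Nadella specified): every tenfold increase in compute buys the same fixed increment.

```python
import math

def intelligence_from_compute(compute: float, scale: float = 1.0) -> float:
    """Literal reading of the quote: score = scale * log10(compute).

    `scale` and the base-10 log are illustrative assumptions.
    """
    if compute <= 0:
        raise ValueError("compute must be positive")
    return scale * math.log10(compute)

# Compute grows 100x between rows, yet the score only rises by two units.
for exponent in range(0, 7, 2):
    compute = 10.0 ** exponent
    score = intelligence_from_compute(compute)
    print(f"compute = 1e{exponent}: score = {score:.1f}")
```

Under this assumption, going from 10 to 100 units of compute adds exactly as much as going from 100,000 to 1,000,000. The bill grows exponentially while the payoff grows linearly.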

But history suggests technological races are rarely won by brute force — rather they are won by elegance.

Compute as Intelligence 🙈

This is probably the greatest misconception in AI — and this misconception is leading to infinite malinvestment.

AI cannot produce intelligence. All it can do is spit out responses based on prompts and instructions, using the AI models that we power it with.

Faster compute, more of it, and bigger models do not produce intelligence. All I see is a monkey that can “read” books faster, and switch between them faster.

That doesn’t mean the monkey is 1,000 Albert Einsteins or Leonardo da Vincis, or that he can combine the intelligence of an Einstein or a da Vinci…

In other words, AI scales pattern recognition but not intelligence.

Technologists tend to fall into these intellectual traps. Just as economists believe in market fundamentalism, and geneticists (and others) believe in genetic determinism, technologists believe in technological determinism.

Technological determinism is the idea that technological progress will inevitably supersede human labor and judgment — a sort of technological inevitability.

Artificial General Intelligence (AGI) is fundamentally a technologically deterministic idea.

Real Intelligence (RI)

I asked ChatGPT: “What is intelligence?”

Intelligence = the ability to model reality and act effectively.

That’s the cleanest definition.

A bit more precise

Intelligence is the ability to:

- Learn from experience

- Recognize patterns

- Predict outcomes

- Solve novel problems

- Adapt to change

Not memorization. Not speed. Not knowledge.

Those are inputs.

Based on the above, strictly, AI cannot generate intelligence.

The Automation Everything Era

AI Labs are jumping on the vibe coding and automation bandwagon. Because remember, their raison d’être is not really to make everything more efficient.

It’s to get you to use their products — and automation seems to be all the rage these days. Claude Code this, CoWork that, Clawdbot — agents, it’s the automation everything era.

But imagine how many screw ups are going to happen because of this.

Fragility is going through the roof. But Anthropic doesn’t care, and neither does OpenAI. In fact, screw-up events could work in their favour: more solutions needed means more scope for them to launch and offer something new.

The joke seems to be that everyone is telling everyone else to deploy an army of agents, but that no one knows why.

The race between AI labs (Anthropic, OAI, xAI etc.) is not in becoming the most effective, efficient, profitable or most robust. The race is in getting the most users, losing the least money, and killing the competition. The race is in being seen as the best, at least for now.

Perception is reality. And optimising for perception leads to different outcomes than optimising for efficiency.

AI Agents: the Big Demand Driver

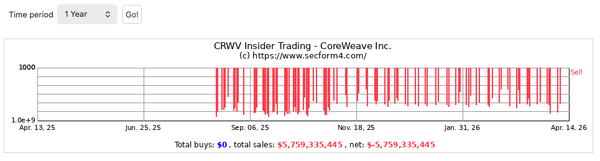

Those arguing for more compute, thus vindicating obscene capex programs, also argue that those who command compute will be the masters of the universe.

They argue that the ROI is there, and that these management teams aren’t stupid and know what they are doing.

But Big Tech is in on it too. They are benefitting from trillion-dollar rallies in their valuations, amassing power, money and capital in the process. They are the process, and they want it to keep going for as long as possible.

Microsoft and Google are paying influencers big money to appear to be using their tools. Why? Because that’s where the money is.

They must show growth in those product lines, or else the narrative dies. Again, perception is reality.

No matter if no one really uses them, no matter if corporates are forcing their employees to use their AI tools while no one really does. What matters is the now.

Short-form content — but for the economy.

“When the music stops, in terms of liquidity, things will be complicated. But as long as the music is playing, you’ve got to get up and dance.”

—Chuck Prince, CEO of Citigroup, 2007

Many ask when the AI hype will stop.

- When they see the ROI?

- When they admit to not seeing the ROI?

- When OpenAI can’t raise the money?

I don’t think that’s where we should be looking for the answer. There is not much logic or reason in this process, so why look to reason?

The guiding light for what the market will do is the market itself. When the market decides that Microsoft or Amazon are making the wrong moves, they will eventually stop.

They might stop to appease the market, or they might stop because they can no longer raise the money (i.e. the market was the enabler), as in the case of Oracle.

The point is, the market will make them stop. The market will inflict the pain required to discipline the actors involved.

Microsoft is down 30% since October and Oracle is down 60% since September.

Market fundamentalists believe that the market, left to its own devices, will solve every problem magically. The “invisible hand” as we were taught in Economics 101.

Soros argued against that, and economic history proves that it does not hold true.

It is however the market mechanism that will abort this process. Maybe then some will use that as an excuse to argue for market fundamentalism.

But regardless, producing or corroborating economic theory isn’t our goal — operational success is.

Cracks Showing

Markets already aborted Oracle’s expansion, and slapped Microsoft and Amazon in the face. Remember, we never know what will happen beforehand. We are just following the process as it evolves and unfolds.

But for now the damage done is notable — and it could fester and gain momentum.

For now, Big AI is resolved to follow through fully on its aspirations in the broader AI race.

The market recently bid Google shares up 100% after Google’s successful Gemini and TPU launches. But everyone else is doing that too. ASIC-type chips already existed before Google launched its TPU, and chatbots will continue to lose money even after the Gemini launch.

In fact, Chatbots have a very low chance of surviving as a stand-alone business. The forces of creative destruction and a race to the bottom will commoditise chatbots and forcibly put them in a bundle.

Google is already doing it with their Google AI Pro bundle: some 22 euros a month gets you Gemini and 2TB of storage space. This is one way Google is fighting OpenAI, Art of War style.

The hype around chatbots is already tapering, with enthusiasts reducing their usage materially as the life cycle progresses. At one point I paid for both Claude and ChatGPT. I recently cancelled my ChatGPT subscription, partly because I get Gemini for free and partly because Sam Altman shouldn’t be given any more power. Not that the rest are any better…

Chatbot usage depends on individual users, but for me, the hype is tapering. I might even cancel Claude and keep Gemini there for whenever I need a chatbot. See? It’s already happening.

I still enjoy Claude, but I don’t want to get too used to a chatbot life. A few days ago, Anthropic made an issue of foreign labs stealing their models via a distillation attack — basically reverse engineering their models.

Well, LLMs are literally built on everyone else’s work. Anthropic didn’t seem to mind then. The double standards are obvious.

“Open internet for me, but not for thee.”

A few days ago Sam Altman likened an AI model to a human, comparing the energy required for a human to grow, learn and be useful to the economy with the energy required to “train an AI model”.

And he used that analogy to conclude that AI models are more efficient, conveniently ignoring that the AI model is training on human-generated data.

This line of reasoning is dangerous and falls squarely under the idea of technological determinism.

The Squeeze: Economics 101

When the market finally decides to bid down AI valuations (i.e. the bust), you will see a dismantling of the AI state of affairs as we know it today. Compute will no longer be subsidised or handed out for free to consumers who waste it, even while inference becomes cheaper and cheaper.

The truth is, compute isn’t being subsidised because they love us. Jensen wants to show growth, and those financing OpenAI are doing it for the main event: the OpenAI IPO.

OpenAI is gunning for a $1 trillion valuation, and those funding OpenAI’s losses wouldn’t have it any other way.

But even at a ~$1 trillion valuation it would still be loss making — with chatbot competition heating up and chatbot economics further heading south.

People assume that chatbot subscription prices or API calls will stay fixed as compute costs crash to the floor. No, chatbot prices are also on a secular downswing.

What matters is the margin Compute Merchants earn — after costs to train their models and keep up with the LLM race of course.
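To make the squeeze concrete, here is a rough sketch with made-up numbers. The function name, the $20 price, the $10 serving cost, the $5 per-subscriber training cost and the 30% yearly declines are all hypothetical assumptions for illustration, not data. The point is only that when prices fall as fast as compute costs while the cost of keeping up with the model race stays put, the margin shrinks and can turn negative.

```python
def margin_path(price, compute_cost, training_cost,
                price_decline, compute_decline, years):
    """Return (year, price, compute_cost, margin) per year.

    margin = price - compute_cost - training_cost, where training_cost
    is assumed fixed per subscriber (a simplifying assumption).
    """
    rows = []
    for year in range(years + 1):
        margin = price - compute_cost - training_cost
        rows.append((year, price, compute_cost, margin))
        # Price and serving cost both shrink each year; training cost does not.
        price *= 1 - price_decline
        compute_cost *= 1 - compute_decline
    return rows

# Hypothetical inputs: $20 subscription, $10 compute, $5 training per user,
# with price and compute cost both falling 30% a year.
for year, price, cost, margin in margin_path(20.0, 10.0, 5.0, 0.3, 0.3, 3):
    print(f"year {year}: price={price:.2f} compute={cost:.2f} margin={margin:.2f}")
```

In this toy setup cheaper compute does not rescue the business, because the selling price falls with it and the fixed cost of staying in the race takes a growing share of what is left.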

LLM differentiation is converging and pretty soon many users will either have 1 chatbot subscription that they pay for, 1 chatbot subscription that is bundled for free or 0 chatbot subscriptions.

Ignorance of Higher-Order Effects

Some seem to believe that AI proliferation will drive record corporate profits and productivity — but that ignores higher-order effects.

Warren Buffett explained it simply with the “standing on your tiptoes” analogy. In the first order, every textile mill kept ordering the newest machinery to increase productivity and profits.

But the second-order effect was that everyone did the same and productivity converged across all producers. In the end, the capex for that new machinery barely paid for itself.

In the case of AI proliferation today, compute will be abundant and cheap. Everyone will be able to run models and agents cheaply, from their own home if they wanted to.

The owners of compute do not have or own something special — and compute does not equal intelligence.

The Efficiency Layer: from Brute Force to Elegance

As the technology develops and progresses, less absolute compute will be required for the same workflow. Efficiencies stem from all layers in the stack.

- The Chips are getting more efficient (more compute per unit of cost)

- The Models are getting more efficient (more work per token)

- RAG and MCP are compute-efficiency architectures that will be increasingly used in the AI-tool stack (more work per token)

- As Models get better — much less compute will be wasted on wrong answers, wrong images, mistakes etc. (more work per token)

All the above together can reduce raw compute costs per workflow by multiples. By that time, compute will be cheap, abundant and ubiquitous.
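The reason the reduction comes in multiples is that gains at different layers compound rather than add. A back-of-the-envelope sketch, where every multiplier is a hypothetical placeholder and not a measured figure:

```python
from math import prod

# Hypothetical efficiency multipliers per layer (illustrative, not measured).
layer_gains = {
    "chips": 2.0,                 # more compute per unit of cost
    "models": 2.0,                # more work per token
    "rag_and_mcp": 1.5,           # more work per token
    "fewer_wasted_answers": 1.5,  # fewer retries and mistakes
}

def compute_cost_after_gains(baseline_cost: float, gains: dict[str, float]) -> float:
    """Divide the baseline cost by the product of all layer multipliers."""
    return baseline_cost / prod(gains.values())

combined = prod(layer_gains.values())
new_cost = compute_cost_after_gains(90.0, layer_gains)
print(f"combined gain: {combined:.1f}x, cost per workflow: {new_cost:.2f}")
```

With these assumed numbers, four modest improvements multiply into a 9x drop in compute cost per workflow, even though no single layer improved by more than 2x.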

Obsessing over raw compute and copious amounts of it is a brutal way of solving the issue at hand. It’s a linear approach to a complex problem.

The money is not in brute-force compute supply but in elegant solutions.

Agents and Software Companies

There seems to be a settled assumption that AI labs will control AI agents and their massive proliferation going forward.

IBM crashed 10% because Anthropic said that their models can now work with COBOL. The market is jumping from A to Z on just one announcement, but life isn’t that simple.

Especially when you are dealing with globally-installed bases, multi-decade relationships, millions of lines of code, legacy systems, complex workflows and risks that are worth a million times the savings you think you will get — when those risks eventually blow up.

Why aren’t companies like IBM or Duolingo better suited to use AI technology to their advantage?

Why will it be someone new that will use the powers of LLMs to vibe code a substitute and somehow magically engineer a working version — and then capture the whole market via the distribution he doesn’t even have?

And why will users flock to this magically-produced competitor? Simply a better price?

Well, if the input costs required to build and maintain software drop — the first who will benefit from those cost savings are the incumbents, with their selling prices falling as a response to their reduced overhead.

Linear extrapolation is the culprit here, and everyone is jumping on the bandwagon.

The absurdity of the whole situation is encapsulated by this tweet.

Network-Effect Moats

DoorDash and Uber Eats

In the case of platforms like DoorDash, Uber Eats or even Airbnb, the value is ultimately in their network effects. A seller, a buyer, an advertiser or a gig worker goes to platform XYZ because everyone else is already there. The moat is not in the code or even the infrastructure, but ultimately in the network effects.

This does not mean the moat is unbreakable — it just means that the future of the business lies in its network effects, and how much value it can extract from said network.

When buyers, sellers and gig workers start flocking to other networks en masse — you know the incumbent networks are getting disrupted.

Visa and Mastercard

In the case of card networks like Visa and Mastercard — the moat is neither in the card-reader technology nor in the code that powers the network.

Again, it is the network itself that creates the value. The buyer having a Visa card and being able to use it in a store that takes Visa is the whole business. I agree, the network can’t run without the devices or the code — but that infrastructure on its own does not a Visa make. And you can’t vibe code that on Claude.

But at the same time, this does not mean they are marked safe from disruption.

Card networks like Visa and Mastercard are getting disrupted by instant payments, open banking and the proliferation of Neobanks and payment systems that dis-intermediate the need for card networks.

Why go through a card network when you can transact cheaply, instantly and without friction anyway?

Apple’s Meta-Game

Apple was bashed by the market and labelled an AI loser during the hype phase of AI adoption (refer to the Techno-Imperial Cycle), but they resisted the market’s call to spend their entire cash pile on data centers.

Instead, they took their time preparing their ecosystem for AI adoption and found the models and infrastructure partners they best wanted to work with (i.e. Google). Apple understood that the value will be derived from the Interface layer — and not the Model or Infrastructure Layer in the AI Tech Stack.

Instead of bashing Apple for being late to the AI game and needing to make a big purchase like buying Perplexity, the market is now praising Apple for letting Google take the capex hit while they print cash.

Even Dan Ives, a man who went on CNBC multiple times to talk about Apple’s “BlackBerry Moment”, is now saying 2026 is Apple’s year to shine.

You can read about it here.

Anti-Determinism

Negative capability is the capacity to hold opposing ideas in your head without rushing to adopt the one or the other. Deterministic reasoning is the trap you want to avoid.

AI being a transformative technology does not mean outcomes must either go to 0 or 1,000X.

Reality is rarely binary.

Philo 🦉

Essay sponsored by cotraderapp.com